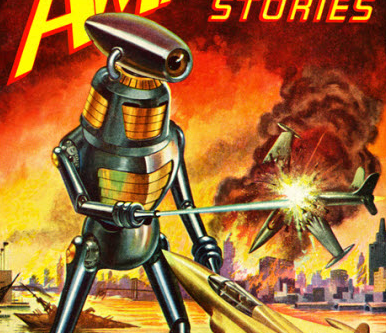

Digital Detox 2023 Preview: Robot Invasion 101

It’s hard not to be aware of the encroachment of artificial intelligences* on our teaching and learning spaces if you work in education these days. The launch of ChatGPT a few weeks ago has caught many teachers and learners off guard with its level of sophistication and its capacity to absorb the seemingly mundane writing tasks that make up, well, a lot of the homework we assign. Of course, some have rung the death knell of the essay, while others have found more circumspect ways to think about this moment. Either way, it seems like something we need to talk about.

The truth is that machine learning and algorithmic processes are already here. Students are assessed for language competency by computer, and a computer assesses their progress and their risk of failing. Algorithms paired with learning analytics make big claims about who our students are and how we should understand them. And computer-aided proctoring services are making critical claims about whether or not students are cheating on exams. If we trust them. And if we let them.

I’m slightly obsessed with all of this and I’m eager to think about ways we can see this as a moment of opportunity. It was the topic of my podcast this week and a webinar last week. To what extent might we be experiencing a disruption of some aspects of education that actually really needed to be disrupted, and can we imagine a version of higher education that is nimble enough to respond? At the same time, I am clear-eyed about the racist and ableist implications of many of these tools, and I’m not sure — after three years of a pandemic where harm in education has not been equitably shared — that higher education is up to the challenge of confronting them.

Over six weeks beginning in January, we’ll talk about: data sets and how AI is trained, and AI practices already in place; the racist and ableist implications of these tools and their applications; AI and writing instruction; the outsourcing of teacherly judgement; the AI arms race we create when both student and evaluator have access to these tools; and whether higher education is capable of adapting to a new suite of tools.

As always the Digital Detox will focus on the world of higher education, but I think there will be much to reflect on for people in the K-12 space, too. I know I spend a lot of time thinking about how these tools and processes will impact my five-year-old’s progress through formal education.

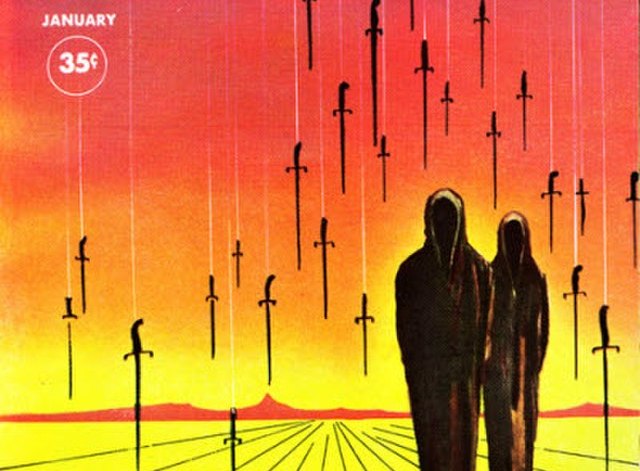

If this all sounds good to you, make sure you’re registered and join me in January. It’s going to be fun, and harrowing, and dark. But mostly fun**.

Register for the TRU Digital Detox 2024

*I’m not spending a lot of time here thinking about what is or is not artificial intelligence or the differences between how the term is used popularly and more specifically: we’ll define our own terms when things get underway. I’m talking about a constellation of algorithmic processes here that largely seek to outsource the traditionally human (and humane?) acts of teaching and learning to computers, whether that’s machine learning or AI or something else. I am no computer scientist, pals: I’m a lowly English major who just cares a whole lot. There’s room to debate me on how much this matters, though. Consider a guest submission? Your perspective will get archived as part of the Digital Detox itself.

**Relative fun level is not a guarantee.

Yes, this sounds great. I truly believe that ChatGPT is just the beginning of a new era where AI is fully accessible to everyone. The AI arms race you talk about is very real and needs to be fully understood and addressed in academia. The use of AI is the new reality that we must face head-on, and AI literacy must be taught to students at all levels so that they can be knowledgeable and competitive in our new world.